Original blog post: http://zengzhaozheng.blog.51cto.com/8219051/1379271

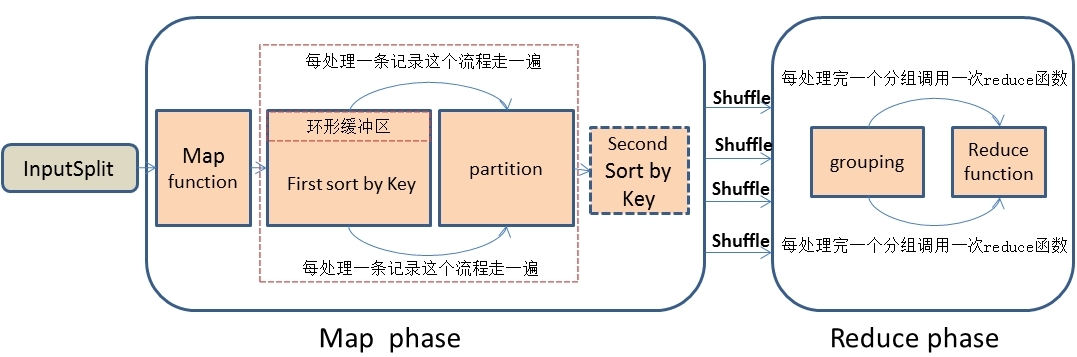

Hadoop MapReduce: a detailed walkthrough of custom secondary sort, with a worked example


package com.mr;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.WritableComparable;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

/**

* Custom composite key.

* @author zengzhaozheng

*/

public class CombinationKey implements WritableComparable<CombinationKey>{

private static final Logger logger = LoggerFactory.getLogger(CombinationKey.class);

private Text firstKey;

private IntWritable secondKey;

public CombinationKey() {

this.firstKey = new Text();

this.secondKey = new IntWritable();

}

public Text getFirstKey() {

return this.firstKey;

}

public void setFirstKey(Text firstKey) {

this.firstKey = firstKey;

}

public IntWritable getSecondKey() {

return this.secondKey;

}

public void setSecondKey(IntWritable secondKey) {

this.secondKey = secondKey;

}

@Override

public void readFields(DataInput dataInput) throws IOException {

// Read the fields in the same order that write() emits them.

this.firstKey.readFields(dataInput);

this.secondKey.readFields(dataInput);

}

@Override

public void write(DataOutput outPut) throws IOException {

this.firstKey.write(outPut);

this.secondKey.write(outPut);

}

/**

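* Custom comparison strategy.

* Note: compareTo is the default ordering for MapReduce's first sort, i.e. the sort

* step of the map phase, which runs in the circular buffer (sized via io.sort.mb).

* When a sort comparator is registered with job.setSortComparatorClass, as in the

* driver for this example, the framework uses that comparator instead.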

*/

@Override

public int compareTo(CombinationKey combinationKey) {

logger.info("-------CombinationKey flag-------");

return this.firstKey.compareTo(combinationKey.getFirstKey());

}

}
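The `write`/`readFields` pair above only works if both methods handle the fields in the same order. As a minimal sketch of that contract, the following uses only `java.io` so it runs without Hadoop on the classpath. `CompositeKeySketch` and its `roundTrip` helper are hypothetical stand-ins, not part of the original post, and a `String` plus an `int` replace `Text` and `IntWritable`.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in for CombinationKey (assumption: String/int replace Text/IntWritable).
final class CompositeKeySketch {
    String first = "";
    int second;

    // Serialize the fields in a fixed order: first, then second.
    void write(DataOutput out) throws IOException {
        out.writeUTF(first);
        out.writeInt(second);
    }

    // Deserialize in exactly the same order that write() used.
    void readFields(DataInput in) throws IOException {
        first = in.readUTF();
        second = in.readInt();
    }

    // Pushes a key through a byte array and reads it back into a fresh instance.
    static CompositeKeySketch roundTrip(String first, int second) {
        try {
            CompositeKeySketch original = new CompositeKeySketch();
            original.first = first;
            original.second = second;
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(bytes)) {
                original.write(out);
            }
            CompositeKeySketch copy = new CompositeKeySketch();
            try (DataInputStream in =
                     new DataInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        CompositeKeySketch copy = roundTrip("sort2", 54);
        System.out.println(copy.first + "," + copy.second);
    }
}
```

Swapping the two reads in `readFields` would make the round trip fail, which is the kind of mistake this ordering rule guards against.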


package com.mr;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.mapreduce.Partitioner;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

/**

* Custom partitioner.

* @author zengzhaozheng

*/

public class DefinedPartition extends Partitioner<CombinationKey,IntWritable>{

private static final Logger logger = LoggerFactory.getLogger(DefinedPartition.class);

/**

* Input: the map output.

* @author zengzhaozheng

* @param key map output key

* @param value map output value

* @param numPartitions total number of partitions, i.e. the number of reduce tasks

*/

@Override

public int getPartition(CombinationKey key, IntWritable value,int numPartitions) {

logger.info("--------enter DefinedPartition flag--------");

/**

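* Note: this uses the same hashing scheme as the default partitioner, but hashes

* only the first field of the composite key.

* Without a custom partitioner, the framework's default hash partitioner would

* partition on the whole composite key, so records sharing a first field could land

* in different partitions, which is clearly not what we want.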

*/

logger.info("--------out DefinedPartition flag--------");

/**

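* The choice of partitioning method matters: it determines whether severe data skew occurs.

* Pick it according to the distribution of your own data, spreading different keys

* across different reducers as evenly as possible to get the most out of parallel processing.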

*/

return (key.getFirstKey().hashCode()&Integer.MAX_VALUE)%numPartitions;

}

}
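The return expression in `getPartition` masks the hash with `Integer.MAX_VALUE` before taking the remainder, because `hashCode()` may be negative and a negative partition index is invalid. A minimal sketch of the same arithmetic follows. `PartitionSketch` is a hypothetical helper, and it hashes a plain `String`, so the partition numbers it prints are not the ones `Text.hashCode()` would produce in the real job.

```java
// Hypothetical helper mirroring the arithmetic of DefinedPartition.getPartition.
final class PartitionSketch {
    static int partitionFor(String firstKey, int numPartitions) {
        // Clearing the sign bit keeps the result in [0, numPartitions).
        return (firstKey.hashCode() & Integer.MAX_VALUE) % numPartitions;
    }

    public static void main(String[] args) {
        for (String key : new String[] {"sort1", "sort2", "sort6"}) {
            System.out.println(key + " -> partition " + partitionFor(key, 3));
        }
    }
}
```

Because only the first field is hashed, every record with the same first field goes to the same reducer regardless of its second field.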


package com.mr;

import org.apache.hadoop.io.WritableComparable;

import org.apache.hadoop.io.WritableComparator;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

/**

* Custom secondary-sort strategy.

* @author zengzhaozheng

*/

public class DefinedComparator extends WritableComparator {

private static final Logger logger = LoggerFactory.getLogger(DefinedComparator.class);

public DefinedComparator() {

super(CombinationKey.class,true);

}

@Override

public int compare(WritableComparable combinationKeyOne,

WritableComparable combinationKeyOther) {

logger.info("---------enter DefinedComparator flag---------");

CombinationKey c1 = (CombinationKey) combinationKeyOne;

CombinationKey c2 = (CombinationKey) combinationKeyOther;

/**

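* If the two keys differ in their first field, order them by that first field.

* This branch also controls the order of the first field in the final output:

* the comparison below sorts the first field ascending; to sort it descending, swap c1 and c2 (hypothesis 1).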

*/

if(!c1.getFirstKey().equals(c2.getFirstKey())){

logger.info("---------out DefinedComparator flag---------");

return c1.getFirstKey().compareTo(c2.getFirstKey());

}

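else{// Sort by the second field ascending; swapping c1 and c2 sorts it descending (hypothesis 2)

logger.info("---------out DefinedComparator flag---------");

// compareTo avoids the integer overflow that subtracting the two values can cause

return c1.getSecondKey().compareTo(c2.getSecondKey());// negative, zero, or positive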

}

/**

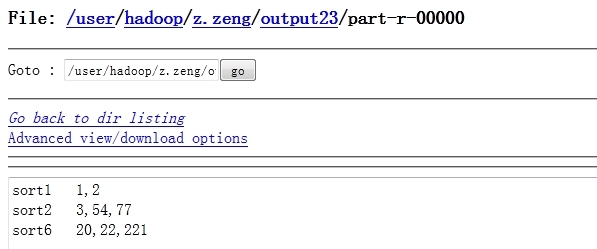

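* (1) With the implementation above, the final secondary-sort result is:

* sort1 1,2

* sort2 3,54,77

* sort6 20,22,221

* (2) With hypothesis 1 applied, the final secondary-sort result is:

* sort6 20,22,221

* sort2 3,54,77

* sort1 1,2

* (3) With hypothesis 2 applied, the final secondary-sort result is:

* sort1 2,1

* sort2 77,54,3

* sort6 221,22,20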

*/

}

}
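The ordering that `DefinedComparator` imposes can be tried out without a cluster. The sketch below sorts the sample records from the job description with a plain `java.util.Comparator`, once in the default order and once with the second field reversed (hypothesis 2). `SecondarySortSketch` and its `Key` class are hypothetical stand-ins for `CombinationKey`, and `String` comparison is assumed to match `Text` comparison for these ASCII keys.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

final class SecondarySortSketch {
    // Hypothetical record type standing in for CombinationKey.
    static final class Key {
        final String first;
        final int second;
        Key(String first, int second) { this.first = first; this.second = second; }
        @Override public String toString() { return first + "," + second; }
    }

    // Same ordering as DefinedComparator: first field ascending, then second field ascending.
    static final Comparator<Key> ASCENDING =
        Comparator.comparing((Key k) -> k.first).thenComparingInt(k -> k.second);

    // Hypothesis 2: first field still ascending, second field descending.
    static final Comparator<Key> SECOND_DESCENDING =
        Comparator.comparing((Key k) -> k.first)
                  .thenComparing(Comparator.comparingInt((Key k) -> k.second).reversed());

    // Sorts the sample input from the job description with the given ordering.
    static List<Key> sorted(Comparator<Key> order) {
        List<Key> keys = new ArrayList<>(Arrays.asList(
            new Key("sort1", 1), new Key("sort2", 3), new Key("sort2", 77),
            new Key("sort2", 54), new Key("sort1", 2), new Key("sort6", 22),
            new Key("sort6", 221), new Key("sort6", 20)));
        keys.sort(order);
        return keys;
    }

    public static void main(String[] args) {
        System.out.println(sorted(ASCENDING));
        System.out.println(sorted(SECOND_DESCENDING));
    }
}
```

Using `thenComparingInt` rather than subtracting the two values keeps the comparison correct even when the difference would overflow an `int`.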


package com.mr;

import org.apache.hadoop.io.WritableComparable;

import org.apache.hadoop.io.WritableComparator;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

/**

* Custom grouping strategy.

* Composite keys with the same first field are placed in the same group.

* @author zengzhaozheng

*/

public class DefinedGroupSort extends WritableComparator{

private static final Logger logger = LoggerFactory.getLogger(DefinedGroupSort.class);

public DefinedGroupSort() {

super(CombinationKey.class,true);

}

@Override

public int compare(WritableComparable a, WritableComparable b) {

logger.info("-------enter DefinedGroupSort flag-------");

CombinationKey ck1 = (CombinationKey)a;

CombinationKey ck2 = (CombinationKey)b;

logger.info("-------Grouping result:"+ck1.getFirstKey().

compareTo(ck2.getFirstKey())+"-------");

logger.info("-------out DefinedGroupSort flag-------");

return ck1.getFirstKey().compareTo(ck2.getFirstKey());

}

}
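The grouping comparator is applied to keys that are already sorted: a new reduce group starts whenever two neighbouring keys compare as different. The following sketch imitates that walk over the sorted sample data in plain Java. `GroupingSketch` is a hypothetical illustration of the idea, not the framework's actual implementation.

```java
import java.util.ArrayList;
import java.util.List;

final class GroupingSketch {
    // Walks keys that are already sorted and closes a group whenever the first
    // field of the next key differs, which is the role DefinedGroupSort plays.
    static List<String> group(String[] firsts, int[] seconds) {
        List<String> out = new ArrayList<>();
        StringBuilder values = new StringBuilder();
        for (int i = 0; i < firsts.length; i++) {
            if (values.length() > 0) {
                values.append(',');
            }
            values.append(seconds[i]);
            boolean lastOfGroup = i == firsts.length - 1
                || firsts[i].compareTo(firsts[i + 1]) != 0;
            if (lastOfGroup) {
                out.add(firsts[i] + "\t" + values); // one reduce call per group
                values.setLength(0);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        String[] firsts = {"sort1", "sort1", "sort2", "sort2", "sort2", "sort6", "sort6", "sort6"};
        int[] seconds = {1, 2, 3, 54, 77, 20, 22, 221};
        for (String line : group(firsts, seconds)) {
            System.out.println(line);
        }
    }
}
```

The values inside each group keep the order the sort comparator gave them, which is why the reducer can simply concatenate them.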


package com.mr;

import java.io.IOException;

import java.util.Iterator;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.lib.input.KeyValueTextInputFormat;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

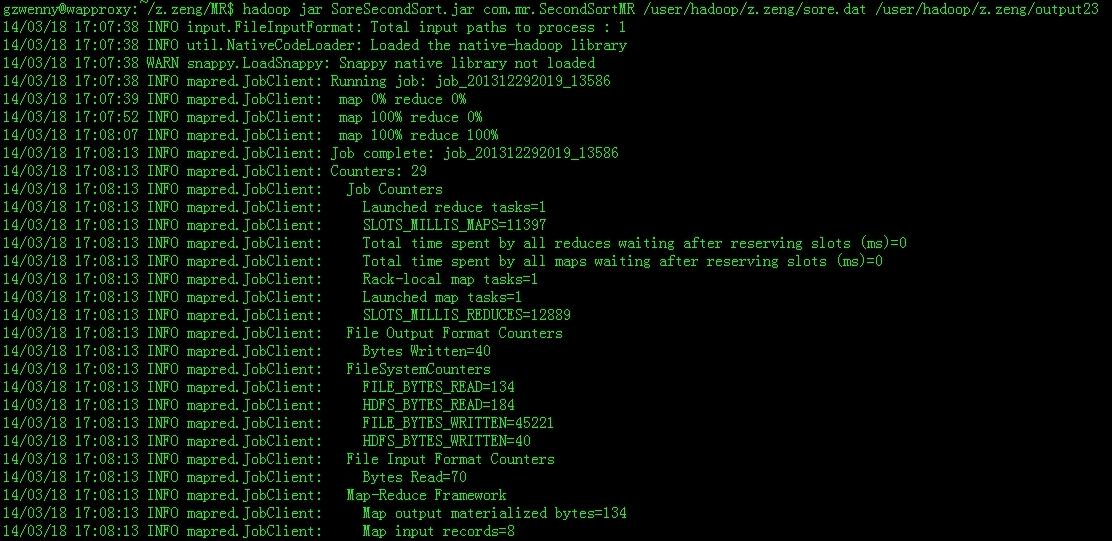

/**

* @author zengzhaozheng

*

* Purpose: secondary sort with MapReduce.

* Requirement:

* ---------------Input-----------------

* sort1,1

* sort2,3

* sort2,77

* sort2,54

* sort1,2

* sort6,22

* sort6,221

* sort6,20

* ---------------Expected output---------------

* sort1 1,2

* sort2 3,54,77

* sort6 20,22,221

*/

public class SecondSortMR extends Configured implements Tool {

private static final Logger logger = LoggerFactory.getLogger(SecondSortMR.class);

public static class SortMapper extends Mapper<Text, Text, CombinationKey, IntWritable> {

//---------------------------------------------------------

/**

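* A note on why these variables are declared outside the map function.

* In a distributed program, memory use needs attention. The MapReduce framework

* calls map once for every input record. Suppose this mapper processes 100 million

* records: if these variables were declared inside map, the four of them would

* create a huge number of objects (up to 4 x 100 million in the extreme case; Java's

* GC reclaims them, so they never all exist at once, but a lot of time is spent

* collecting them) and memory is wasted. Declared outside map,

* there are at most four such objects per mapper.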

*/

CombinationKey combinationKey = new CombinationKey();

Text sortName = new Text();

IntWritable score = new IntWritable();

String[] inputString = null;

//---------------------------------------------------------

@Override

protected void map(Text key, Text value, Context context)

throws IOException, InterruptedException {

logger.info("---------enter map function flag---------");

// Filter out invalid records

if(key == null || value == null || key.toString().equals("")

|| value.toString().equals("")){

return;

}

sortName.set(key.toString());

score.set(Integer.parseInt(value.toString()));

combinationKey.setFirstKey(sortName);

combinationKey.setSecondKey(score);

// Emit the map output

context.write(combinationKey, score);

logger.info("---------out map function flag---------");

}

}

public static class SortReducer extends

Reducer<CombinationKey, IntWritable, Text, Text> {

StringBuffer sb = new StringBuffer();

Text sore = new Text();

/**

* Note when and how often reduce is called: the framework calls reduce once

* for each group. What is a group? An example makes it clear:

* e.g.

* {{sort1,{1,2}},{sort2,{3,54,77}},{sort6,{20,22,221}}}

* This is the data after grouping; the individual groups are {sort1,{1,2}},

* {sort2,{3,54,77}} and {sort6,{20,22,221}}.

*/

@Override

protected void reduce(CombinationKey key,

Iterable<IntWritable> value, Context context)

throws IOException, InterruptedException {

sb.delete(0, sb.length());// Clear the previous group's data first

Iterator<IntWritable> it = value.iterator();

while(it.hasNext()){

sb.append(it.next()+",");

}

// Remove the trailing comma

if(sb.length()>0){

sb.deleteCharAt(sb.length()-1);

}

sore.set(sb.toString());

context.write(key.getFirstKey(),sore);

logger.info("---------enter reduce function flag---------");

logger.info("reduce Input data:{["+key.getFirstKey()+","+

key.getSecondKey()+"],["+sore+"]}");

logger.info("---------out reduce function flag---------");

}

}

@Override

public int run(String[] args) throws Exception {

Configuration conf=getConf(); // Get the configuration object

Job job=new Job(conf,"SoreSort");

job.setJarByClass(SecondSortMR.class);

FileInputFormat.addInputPath(job, new Path(args[0])); // Set the map input path

FileOutputFormat.setOutputPath(job, new Path(args[1])); // Set the reduce output path

job.setMapperClass(SortMapper.class);

job.setReducerClass(SortReducer.class);

job.setPartitionerClass(DefinedPartition.class); // Set the custom partitioner

job.setGroupingComparatorClass(DefinedGroupSort.class); // Set the custom grouping comparator

job.setSortComparatorClass(DefinedComparator.class); // Set the custom secondary-sort comparator

job.setInputFormatClass(KeyValueTextInputFormat.class); // Set the input format

job.setOutputFormatClass(TextOutputFormat.class);// Use the default output format

// Set the map output key and value types

job.setMapOutputKeyClass(CombinationKey.class);

job.setMapOutputValueClass(IntWritable.class);

// Set the reduce output key and value types

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

job.waitForCompletion(true);

return job.isSuccessful()?0:1;

}

public static void main(String[] args) {

try {

int returnCode = ToolRunner.run(new SecondSortMR(),args);

System.exit(returnCode);

} catch (Exception e) {

e.printStackTrace();

System.exit(1);

}

}

}
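The comment in `SortMapper` about declaring fields outside `map` describes an object-reuse pattern: one mutable holder is overwritten on every call, and its contents are copied out at write time. A rough plain-Java sketch of that pattern follows. `ReuseSketch`, `Holder`, and the `written` list are hypothetical; the list stands in for the map output buffer, on the assumption that the framework serializes the value when it is written rather than keeping a reference to the reused object.

```java
import java.util.ArrayList;
import java.util.List;

final class ReuseSketch {
    // Hypothetical mutable holder standing in for a Writable such as IntWritable.
    static final class Holder {
        int value;
    }

    // One holder is allocated per mapper and overwritten on every map call.
    private final Holder reused = new Holder();

    // Stand-in for the output buffer: it stores a copy of the value at write time.
    final List<Integer> written = new ArrayList<>();

    void map(String rawValue) {
        reused.value = Integer.parseInt(rawValue.trim());
        written.add(reused.value); // copy out immediately; never keep the holder itself
    }

    // Feeds each raw value through map() on a single mapper instance.
    static List<Integer> run(String... rawValues) {
        ReuseSketch mapper = new ReuseSketch();
        for (String raw : rawValues) {
            mapper.map(raw);
        }
        return mapper.written;
    }

    public static void main(String[] args) {
        System.out.println(run("1", "3", "77"));
    }
}
```

The pattern is only safe because nothing holds on to the holder between calls; code that stored the holder itself in a collection would see every entry change with the next record.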


2014-03-18 17:07:45,278 INFO org.apache.hadoop.util.NativeCodeLoader: Loaded the native-hadoop library

2014-03-18 17:07:45,432 WARN org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Source name ugi already exists!

2014-03-18 17:07:45,501 INFO org.apache.hadoop.util.ProcessTree: setsid exited with exit code 0

2014-03-18 17:07:45,506 INFO org.apache.hadoop.mapred.Task: Using ResourceCalculatorPlugin : org.apache.hadoop.util.LinuxResourceCalculatorPlugin@69b01afa

2014-03-18 17:07:45,584 INFO org.apache.hadoop.mapred.MapTask: io.sort.mb = 100

2014-03-18 17:07:45,618 INFO org.apache.hadoop.mapred.MapTask: data buffer = 79691776/99614720

2014-03-18 17:07:45,618 INFO org.apache.hadoop.mapred.MapTask: record buffer = 262144/327680

2014-03-18 17:07:45,626 WARN org.apache.hadoop.io.compress.snappy.LoadSnappy: Snappy native library not loaded

2014-03-18 17:07:45,634 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,634 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,634 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,634 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,634 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,635 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,636 INFO com.mr.SecondSortMR: ---------enter map function flag---------

2014-03-18 17:07:45,637 INFO com.mr.DefinedPartition: --------enter DefinedPartition flag--------

2014-03-18 17:07:45,637 INFO com.mr.DefinedPartition: --------out DefinedPartition flag--------

2014-03-18 17:07:45,637 INFO com.mr.SecondSortMR: ---------out map function flag---------

2014-03-18 17:07:45,637 INFO org.apache.hadoop.mapred.MapTask: Starting flush of map output

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,651 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------enter DefinedComparator flag---------

2014-03-18 17:07:45,652 INFO com.mr.DefinedComparator: ---------out DefinedComparator flag---------

2014-03-18 17:07:45,656 INFO org.apache.hadoop.mapred.MapTask: Finished spill 0

2014-03-18 17:07:45,661 INFO org.apache.hadoop.mapred.Task: Task:attempt_201312292019_13586_m_000000_0 is done. And is in the process of commiting

2014-03-18 17:07:48,494 INFO org.apache.hadoop.mapred.Task: Task 'attempt_201312292019_13586_m_000000_0' done.

2014-03-18 17:07:48,526 INFO org.apache.hadoop.mapred.TaskLogsTruncater: Initializing logs' truncater with mapRetainSize=-1 and reduceRetainSize=-1

2014-03-18 17:07:48,548 INFO org.apache.hadoop.io.nativeio.NativeIO: Initialized cache for UID to User mapping with a cache timeout of 14400 seconds.

2014-03-18 17:07:48,548 INFO org.apache.hadoop.io.nativeio.NativeIO: Got UserName hadoop for UID 1000 from the native implementation


2014-03-18 17:07:51,266 INFO org.apache.hadoop.util.NativeCodeLoader: Loaded the native-hadoop library

2014-03-18 17:07:51,418 WARN org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Source name ugi already exists!

2014-03-18 17:07:51,486 INFO org.apache.hadoop.util.ProcessTree: setsid exited with exit code 0

2014-03-18 17:07:51,491 INFO org.apache.hadoop.mapred.Task: Using ResourceCalculatorPlugin : org.apache.hadoop.util.LinuxResourceCalculatorPlugin@28bb494b

2014-03-18 17:07:51,537 INFO org.apache.hadoop.mapred.ReduceTask: ShuffleRamManager: MemoryLimit=195749472, MaxSingleShuffleLimit=48937368

2014-03-18 17:07:51,542 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Thread started: Thread for merging on-disk files

2014-03-18 17:07:51,542 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Thread started: Thread for merging in memory files

2014-03-18 17:07:51,542 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Thread waiting: Thread for merging on-disk files

2014-03-18 17:07:51,543 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Need another 1 map output(s) where 0 is already in progress

2014-03-18 17:07:51,543 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Thread started: Thread for polling Map Completion Events

2014-03-18 17:07:51,543 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Scheduled 0 outputs (0 slow hosts and0 dup hosts)

2014-03-18 17:07:56,544 INFO org.apache.hadoop.mapred.ReduceTask: attempt_201312292019_13586_r_000000_0 Scheduled 1 outputs (0 slow hosts and0 dup hosts)

2014-03-18 17:07:57,553 INFO org.apache.hadoop.mapred.ReduceTask: GetMapEventsThread exiting

2014-03-18 17:07:57,553 INFO org.apache.hadoop.mapred.ReduceTask: getMapsEventsThread joined.

2014-03-18 17:07:57,553 INFO org.apache.hadoop.mapred.ReduceTask: Closed ram manager

2014-03-18 17:07:57,553 INFO org.apache.hadoop.mapred.ReduceTask: Interleaved on-disk merge complete: 0 files left.

2014-03-18 17:07:57,553 INFO org.apache.hadoop.mapred.ReduceTask: In-memory merge complete: 1 files left.

2014-03-18 17:07:57,577 INFO org.apache.hadoop.mapred.Merger: Merging 1 sorted segments

2014-03-18 17:07:57,577 INFO org.apache.hadoop.mapred.Merger: Down to the last merge-pass, with 1 segments left of total size: 130 bytes

2014-03-18 17:07:57,583 INFO org.apache.hadoop.mapred.ReduceTask: Merged 1 segments, 130 bytes to disk to satisfy reduce memory limit

2014-03-18 17:07:57,584 INFO org.apache.hadoop.mapred.ReduceTask: Merging 1 files, 134 bytes from disk

2014-03-18 17:07:57,584 INFO org.apache.hadoop.mapred.ReduceTask: Merging 0 segments, 0 bytes from memory into reduce

2014-03-18 17:07:57,584 INFO org.apache.hadoop.mapred.Merger: Merging 1 sorted segments

2014-03-18 17:07:57,586 INFO org.apache.hadoop.mapred.Merger: Down to the last merge-pass, with 1 segments left of total size: 130 bytes

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------Grouping result:0-------

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------Grouping result:-1-------

2014-03-18 17:07:57,599 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,600 INFO com.mr.SecondSortMR: ---------enter reduce function flag---------

2014-03-18 17:07:57,600 INFO com.mr.SecondSortMR: reduce Input data:{[sort1,2],[1,2]}

2014-03-18 17:07:57,600 INFO com.mr.SecondSortMR: ---------out reduce function flag---------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------Grouping result:0-------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------Grouping result:0-------

2014-03-18 17:07:57,600 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------Grouping result:-4-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: ---------enter reduce function flag---------

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: reduce Input data:{[sort2,77],[3,54,77]}

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: ---------out reduce function flag---------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------Grouping result:0-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------enter DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------Grouping result:0-------

2014-03-18 17:07:57,601 INFO com.mr.DefinedGroupSort: -------out DefinedGroupSort flag-------

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: ---------enter reduce function flag---------

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: reduce Input data:{[sort6,221],[20,22,221]}

2014-03-18 17:07:57,601 INFO com.mr.SecondSortMR: ---------out reduce function flag---------

2014-03-18 17:07:57,641 INFO org.apache.hadoop.mapred.Task: Task:attempt_201312292019_13586_r_000000_0 is done. And is in the process of commiting

2014-03-18 17:08:00,668 INFO org.apache.hadoop.mapred.Task: Task attempt_201312292019_13586_r_000000_0 is allowed to commit now

2014-03-18 17:08:00,682 INFO org.apache.hadoop.mapreduce.lib.output.FileOutputCommitter: Saved output of task 'attempt_201312292019_13586_r_000000_0' to /user/hadoop/z.zeng/output23

2014-03-18 17:08:03,593 INFO org.apache.hadoop.mapred.Task: Task 'attempt_201312292019_13586_r_000000_0' done.

2014-03-18 17:08:03,596 INFO org.apache.hadoop.mapred.TaskLogsTruncater: Initializing logs' truncater with mapRetainSize=-1 and reduceRetainSize=-1

2014-03-18 17:08:03,615 INFO org.apache.hadoop.io.nativeio.NativeIO: Initialized cache for UID to User mapping with a cache timeout of 14400 seconds.

2014-03-18 17:08:03,615 INFO org.apache.hadoop.io.nativeio.NativeIO: Got UserName hadoop for UID 1000 from the native implementation
